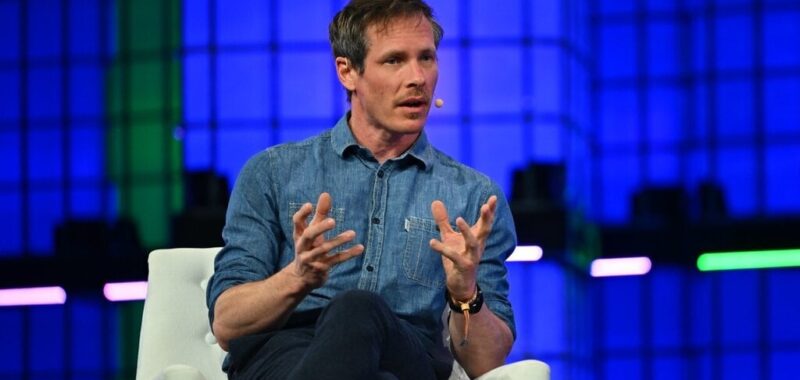

AI startup Hugging Face envisions that small—not large—language models will be used for applications including “next stage robotics,” its Co-Founder and Chief Science Officer Thomas Wolf said.

“We want to deploy models in robots that are smarter, so we can start having robots that are not only on assembly lines, but also in the wild,” Wolf said while speaking at Web Summit in Lisbon today. But that goal, he said, requires low latency. “You cannot wait two seconds so that your robots understand what’s happening, and the only way we can do that is through a small language model,” Wolf added.

Small language models “can do a lot of the tasks we thought only large models could do,” Wolf said, adding that they can also be deployed on-device. “If you think about this kind of game changer, you can have them running on your laptop,” he said. “You can have them running even on your smartphone in the future.”

Ultimately, he envisions small language models running “in almost every tool or appliance that we have, just like today, our fridge is connected to the internet.”

The firm released its SmolLM language model earlier this year. “We are not the only one,” said Wolf, adding that, “Almost every open source company has been releasing smaller and smaller models this year.”

He explained that, “For a lot of very interesting tasks that we need that we could automate with AI, we don’t need to have a model that can solve the Riemann conjecture or general relativity.” Instead, simple tasks such as data wrangling, image processing and speech can be performed using small language models, with corresponding benefits in speed.

The performance of the LLaMA 1B model, which has 1 billion parameters, this year is “equivalent, if not better than, the performance of a 10 billion parameters model of last year,” he said. “So you have a 10 times smaller model that can reach roughly similar performance.”

“A lot of the knowledge we discovered for our large language model can actually be translated to smaller models,” Wolf said. He explained that the firm trains them on “very specific data sets” that are “slightly simpler, with some form of adaptation that’s tailored for this model.”

Those adaptations include “very tiny, tiny neural nets that you put inside the small model,” he said. “And you have an even smaller model that you add into it and that specializes,” a process he likened to “putting a hat for a specific task that you’re gonna do. I put my cooking hat on, and I’m a cook.”
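Wolf did not name a specific technique, but his description resembles what practitioners often call adapters: a few extra weights added on top of a frozen base model, with one set per task. The Python sketch below is purely illustrative and assumes a single toy layer; `BASE_WEIGHTS`, `ADAPTERS`, `forward` and the task names are hypothetical and do not reflect Hugging Face's actual code.

```python
# Illustrative sketch only: every name and number here is hypothetical.

def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

# Frozen weights of one layer in the small base model (4 x 4 = 16 values).
BASE_WEIGHTS = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

# Each "hat" is a pair of thin matrices: "down" (1 x 4) and "up" (4 x 1),
# so a task adds 8 values instead of retraining all 16 base weights.
ADAPTERS = {
    "cooking": {
        "down": [[1.0, 1.0, 0.0, 0.0]],
        "up": [[0.5], [0.0], [0.0], [0.0]],
    },
    "translation": {
        "down": [[0.0, 0.0, 1.0, 1.0]],
        "up": [[0.0], [0.0], [0.0], [0.5]],
    },
}

def forward(x, task=None):
    """Run the base layer, then add the chosen adapter's correction."""
    out = matvec(BASE_WEIGHTS, x)
    if task is not None:
        adapter = ADAPTERS[task]
        hidden = matvec(adapter["down"], x)      # squeeze to 1 value
        delta = matvec(adapter["up"], hidden)    # expand back to 4 values
        out = [o + d for o, d in zip(out, delta)]
    return out

print(forward([1.0, 2.0, 3.0, 4.0]))              # base model, no hat
print(forward([1.0, 2.0, 3.0, 4.0], "cooking"))   # same base, cooking hat
```

Switching tasks in this sketch only changes which small pair of matrices is applied; the base weights are never modified.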

In the future, Wolf said, the AI space will split across two main trends.

“On the one hand, we’ll have this huge frontier model that will keep getting bigger, because the ultimate goal is to do things that human cannot do, like new scientific discoveries,” he said of LLMs. On the other hand, the long tail of AI applications will see the technology “embedded a bit everywhere, like we have today with the internet.”

Edited by Stacy Elliott.